Introduction

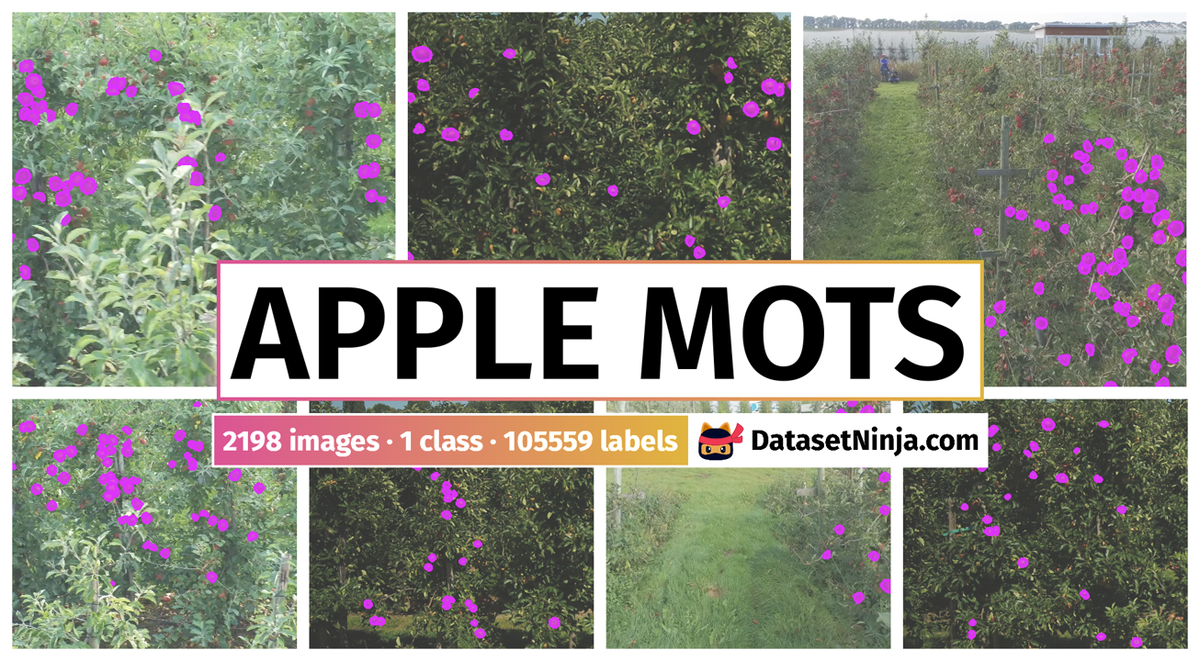

The Apple MOTS dataset (Multiple Object Tracking and Segmentation in an Apple Orchard Field) comprises temporally synchronized images and labels of apples, captured in an orchard using unmanned aerial vehicles (UAVs) and a wearable sensor. It includes a total of 86,000 manually annotated apple instances and 1,700 frames annotated in the MOTS style. Sequences 0 to 5 are allocated for training, and sequences 6 to 8 for testing and validation. Sequences 10 to 12 are test sequences that include overlays for “ignore regions.”
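The image names listed later on this page (for example `0004_000381.png`) appear to encode a sequence number and a frame index. Assuming that `<sequence>_<frame>.png` pattern holds, a small sketch like the following could assign each image to the sequence roles described above. The function names are hypothetical, and sequence 9, which the description does not mention, maps to `None`.

```python
import re
from typing import Optional, Tuple

# Assumed filename pattern "<sequence>_<frame>.png", inferred from names such as
# "0004_000381.png"; this is not a documented convention of the dataset.
FILENAME_RE = re.compile(r"^(\d+)_(\d+)\.png$")


def parse_name(filename: str) -> Tuple[int, int]:
    """Return (sequence_id, frame_index) parsed from an image filename."""
    match = FILENAME_RE.match(filename)
    if match is None:
        raise ValueError(f"unexpected filename: {filename!r}")
    return int(match.group(1)), int(match.group(2))


def split_for_sequence(sequence_id: int) -> Optional[str]:
    """Map a sequence ID to the role given in the dataset description."""
    if 0 <= sequence_id <= 5:
        return "train"
    if 6 <= sequence_id <= 8:
        return "test/validation"
    if 10 <= sequence_id <= 12:
        return "test (with ignore regions)"
    return None  # not covered by the description


seq, frame = parse_name("0004_000381.png")
print(seq, frame, split_for_sequence(seq))  # 4 381 train
```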

Summary

Dataset for Benchmarking Multiple Object Tracking and Segmentation (MOTS) in an Apple Orchard Field is a dataset for instance segmentation, semantic segmentation, and object detection tasks. It is used in the agricultural industry.

The dataset consists of 2,198 images with 105,559 labeled objects belonging to a single class (apple).

Images in the Apple MOTS dataset have pixel-level instance segmentation annotations. Because instance masks carry both per-object and per-class information, they can be automatically converted into semantic segmentation masks (one mask per class) or object detection annotations (one bounding box per object). All images are labeled. The dataset has 2 splits: train (1,147 images) and testing (1,051 images). The images can additionally be grouped into 12 scenes. The dataset was released in 2022 by Wageningen University & Research, Netherlands.
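The conversion from instance masks to the other two task formats is mechanical. Below is a minimal NumPy sketch, assuming each apple instance is available as a boolean mask with the image's shape; the helper names are hypothetical and not part of Dataset Ninja or Supervisely.

```python
import numpy as np


def instances_to_semantic(instance_masks: list, shape: tuple) -> np.ndarray:
    """Merge per-object boolean masks into one semantic mask for the single 'apple' class."""
    semantic = np.zeros(shape, dtype=bool)
    for mask in instance_masks:
        semantic |= mask  # a pixel is "apple" if any instance covers it
    return semantic


def mask_to_bbox(mask: np.ndarray) -> tuple:
    """Return the inclusive (x_min, y_min, x_max, y_max) box of a non-empty boolean mask."""
    ys, xs = np.nonzero(mask)
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())


# Two toy instances on a 6 x 8 image.
h, w = 6, 8
a = np.zeros((h, w), dtype=bool)
a[1:3, 1:4] = True  # 2 rows x 3 columns
b = np.zeros((h, w), dtype=bool)
b[3:6, 5:7] = True  # 3 rows x 2 columns
semantic = instances_to_semantic([a, b], (h, w))
print(semantic.sum())  # 12
print([mask_to_bbox(m) for m in (a, b)])  # [(1, 1, 3, 2), (5, 3, 6, 5)]
```

A logical OR is enough here because the dataset has only one class; with several classes, one semantic mask per class would be built the same way.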

Explore

The Apple MOTS dataset has 2,198 images. The "Explore" tool on Dataset Ninja displays dataset images with their annotations and offers extended visualization capabilities such as zoom, translation, an objects table, and custom filters.

Class balance

There is 1 annotation class in the dataset. The table below gives its general statistics and balance.

| Class | Images | Objects | Count on image (average) | Area on image (average) |
|---|---|---|---|---|
| apple (mask) | 2198 | 105559 | 48.03 | 2.31% |
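Both averages follow from per-image annotation lists. The sketch below assumes that averages are taken over the images that contain the class, that each object's area is given as a fraction of the image area, and that masks of different objects do not overlap, so per-object areas can be summed. The `images` records are made-up placeholders, not dataset values.

```python
from collections import defaultdict

# Placeholder per-image records: image name -> list of (class_name, area_fraction),
# where area_fraction = object mask area / image area.
images = {
    "img_a.png": [("apple", 0.0009), ("apple", 0.0008)],
    "img_b.png": [("apple", 0.0010)],
    "img_c.png": [],
}


def class_balance(images):
    """Per-class image count, object count, and per-image average count and area."""
    n_images = defaultdict(int)
    n_objects = defaultdict(int)
    area_sum = defaultdict(float)
    for objects in images.values():
        per_class_area = defaultdict(float)
        for cls, frac in objects:
            n_objects[cls] += 1
            per_class_area[cls] += frac  # assumes non-overlapping masks
        for cls, area in per_class_area.items():
            n_images[cls] += 1  # image contains at least one object of cls
            area_sum[cls] += area
    return {
        cls: {
            "images": n_images[cls],
            "objects": n_objects[cls],
            "count_avg": n_objects[cls] / n_images[cls],
            "area_avg": area_sum[cls] / n_images[cls],
        }
        for cls in n_images
    }


stats = class_balance(images)
print(stats["apple"]["count_avg"])  # 1.5
print(round(105559 / 2198, 2))  # 48.03, the count average reported in the table
```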

Images

The Images table lists every image in the dataset with the number of annotations of each class it contains, which helps find anomalies and edge cases.

Class sizes

The table below gives size properties of the objects in every class: areas as a percentage of the image area, and heights and widths both in pixels and as a percentage of the image height or width. It helps find classes with the smallest or largest objects and compare object sizes between classes.

| Class | Object count | Avg area | Max area | Min area | Min height (px) | Min height (%) | Max height (px) | Max height (%) | Avg height (px) | Avg height (%) | Min width (px) | Min width (%) | Max width (px) | Max width (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| apple (mask) | 105559 | 0.05% | 0.46% | 0% | 1 | 0.1% | 111 | 11.42% | 28 | 2.89% | 1 | 0.08% | 92 | 7.1% |
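The percentage columns are relative to the image dimension: for example, 111 px / 972 px ≈ 11.42% and 92 px / 1296 px ≈ 7.1% for the 972 × 1296 images listed in the Objects table. A sketch of the aggregation, assuming per-object bounding-box heights and widths and mask areas are already available. The `ObjectSize` record is hypothetical; the heights and widths reuse the first three rows of the Objects table, while the mask areas are placeholders.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ObjectSize:
    height_px: int     # bounding-box height of the object mask
    width_px: int      # bounding-box width of the object mask
    mask_area_px: int  # number of mask pixels
    image_h: int
    image_w: int


def summarize(objects):
    """(min, max, avg) of sizes in pixels and as a percentage of the image."""
    def describe(values):
        return min(values), max(values), mean(values)

    return {
        "height_px": describe([o.height_px for o in objects]),
        "height_pct": describe([100 * o.height_px / o.image_h for o in objects]),
        "width_px": describe([o.width_px for o in objects]),
        "width_pct": describe([100 * o.width_px / o.image_w for o in objects]),
        "area_pct": describe(
            [100 * o.mask_area_px / (o.image_h * o.image_w) for o in objects]
        ),
    }


objs = [
    ObjectSize(37, 38, 1100, 972, 1296),  # mask areas are placeholders
    ObjectSize(36, 38, 1000, 972, 1296),
    ObjectSize(38, 39, 1250, 972, 1296),
]
stats = summarize(objs)
print(stats["height_px"])  # (36, 38, 37)
```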

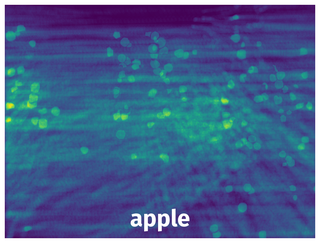

Spatial Heatmap

The heatmaps give the spatial distribution of all objects for every class. They show the most probable and the rarest object locations in the image and help analyze object placement across the dataset.
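One simple way to build such a heatmap, assumed here rather than taken from Dataset Ninja's implementation, is to resample every object mask onto a fixed grid with nearest-neighbour indexing, sum the grids, and normalize by the maximum so that 1.0 marks the most frequently covered location. The `spatial_heatmap` function and its `grid` parameter are hypothetical.

```python
import numpy as np


def spatial_heatmap(masks, grid=(64, 64)):
    """Accumulate boolean masks of any image size onto a fixed grid, normalized to [0, 1]."""
    gh, gw = grid
    heat = np.zeros(grid, dtype=np.float64)
    for mask in masks:
        h, w = mask.shape
        # Nearest-neighbour resampling: source row/column sampled for each grid cell.
        rows = np.arange(gh) * h // gh
        cols = np.arange(gw) * w // gw
        heat += mask[np.ix_(rows, cols)]
    if heat.max() > 0:
        heat /= heat.max()
    return heat


m = np.zeros((8, 8), dtype=bool)
m[0:4, 0:4] = True  # one object in the top-left quarter
heat = spatial_heatmap([m, m], grid=(4, 4))
print(heat[0, 0], heat[3, 3])  # 1.0 0.0
```

Normalizing to a fixed grid lets images of different sizes contribute to the same map; a per-class heatmap would simply pass only that class's masks.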

Objects

The Objects table contains all 105,559 objects; the first 10 rows are shown below.

| Object ID | Class | Image name | Image size (height × width) | Height (px) | Height (%) | Width (px) | Width (%) | Area (%) |
|---|---|---|---|---|---|---|---|---|
| 1 | apple (mask) | 0004_000381.png | 972 × 1296 | 37 | 3.81% | 38 | 2.93% | 0.09% |
| 2 | apple (mask) | 0004_000381.png | 972 × 1296 | 36 | 3.7% | 38 | 2.93% | 0.08% |
| 3 | apple (mask) | 0004_000381.png | 972 × 1296 | 38 | 3.91% | 39 | 3.01% | 0.1% |
| 4 | apple (mask) | 0004_000381.png | 972 × 1296 | 30 | 3.09% | 21 | 1.62% | 0.04% |
| 5 | apple (mask) | 0004_000381.png | 972 × 1296 | 56 | 5.76% | 44 | 3.4% | 0.12% |
| 6 | apple (mask) | 0004_000381.png | 972 × 1296 | 13 | 1.34% | 25 | 1.93% | 0.02% |
| 7 | apple (mask) | 0004_000381.png | 972 × 1296 | 24 | 2.47% | 32 | 2.47% | 0.04% |
| 8 | apple (mask) | 0004_000381.png | 972 × 1296 | 23 | 2.37% | 22 | 1.7% | 0.03% |
| 9 | apple (mask) | 0004_000381.png | 972 × 1296 | 22 | 2.26% | 27 | 2.08% | 0.04% |
| 10 | apple (mask) | 0004_000381.png | 972 × 1296 | 31 | 3.19% | 31 | 2.39% | 0.06% |

License

Citation

If you make use of the Apple orchard field MOTS data, please cite the following reference:

```bibtex
@dataset{jong_stefan_de_2022_5939726,
  author    = {Jong, Stefan de and
               Baja, Hilmy and
               Valente, João},
  title     = {{Dataset for benchmarking Multiple Object Tracking
               and Segmentation (MOTS) in an apple orchard field.}},
  month     = feb,
  year      = 2022,
  publisher = {Zenodo},
  doi       = {10.5281/zenodo.5939726},
  url       = {https://doi.org/10.5281/zenodo.5939726}
}
```

If you are happy with Dataset Ninja and use the provided visualizations and tools in your work, please cite us:

```bibtex
@misc{ visualization-tools-for-apple-mots-dataset,
  title = { Visualization Tools for Apple MOTS Dataset },
  type = { Computer Vision Tools },
  author = { Dataset Ninja },
  howpublished = { \url{ https://datasetninja.com/apple-mots } },
  url = { https://datasetninja.com/apple-mots },
  journal = { Dataset Ninja },
  publisher = { Dataset Ninja },
  year = { 2026 },
  month = { jun },
  note = { visited on 2026-06-10 },
}
```

Download

The Apple MOTS dataset can be downloaded in Supervisely format.

Alternatively, it can be downloaded with the dataset-tools package:

```shell
pip install --upgrade dataset-tools
```

Then run the following Python code:

```python
import dataset_tools as dtools

dtools.download(dataset='Apple MOTS', dst_dir='~/dataset-ninja/')
```

A Python code example on the Supervisely Developer Portal shows how to work with the downloaded dataset.
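As a quick consistency check after downloading (and extracting the archive, if needed), the annotation files can be read directly. This sketch assumes the usual Supervisely project layout, in which each dataset folder has an `ann` subfolder with one JSON file per image whose `objects` entries carry a `classTitle`. The `count_objects` helper is hypothetical and not part of dataset-tools.

```python
import json
from collections import Counter
from pathlib import Path


def count_objects(project_dir):
    """Count images and per-class objects across Supervisely annotation files.

    Assumes annotations live in .../<dataset>/ann/<image>.json and each file
    has an "objects" list whose items contain a "classTitle" key.
    """
    root = Path(project_dir).expanduser()
    if not root.is_dir():
        raise FileNotFoundError(f"no such directory: {root}")
    counts = Counter()
    n_images = 0
    for ann_path in root.rglob("*.json"):
        if ann_path.parent.name != "ann":
            continue  # skip meta.json and other non-annotation files
        with ann_path.open(encoding="utf-8") as f:
            ann = json.load(f)
        n_images += 1
        counts.update(obj["classTitle"] for obj in ann.get("objects", []))
    return n_images, counts
```

Calling `count_objects("~/dataset-ninja/")` on the full download should, if the layout assumption holds, report 2,198 images and 105,559 `apple` objects, matching the statistics above.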

The data in its original format can be downloaded from Zenodo (DOI 10.5281/zenodo.5939726).

Disclaimer

Our gal from the legal department told us we need to post this:

Dataset Ninja provides visualizations and statistics for some datasets that can be found online and downloaded by the general public. Dataset Ninja is not a dataset hosting platform and can only be used for informational purposes. The platform does not claim any rights to the original content, including images, videos, annotations, and descriptions. Joint publishing is prohibited.

You take full responsibility when you use datasets presented on Dataset Ninja, as well as other information we provide, including visualizations and statistics. You are responsible for complying with every dataset license and all other permissions. You are required to visit each dataset's homepage and make sure that you are permitted to use it. In case of any questions, get in touch with us at hello@datasetninja.com.