Introduction #

The COCO 2017 dataset is a component of the extensive Microsoft COCO dataset. To learn more about this dataset, you can visit its homepage. The creators of this dataset, in their pursuit of advancing object recognition, have placed their focus on the broader concept of scene comprehension. This vision is realized through the compilation of images depicting intricate everyday scenes where common objects naturally exist. Within the Microsoft COCO dataset, you will find photographs encompassing 91 different types of objects, all of which are easily identifiable by a four-year-old. This comprehensive dataset includes a grand total of 2.5 million labeled instances distributed across 328,000 images. It’s worth noting that in COCO 2017, specifically, you’ll encounter 1.8 million labeled instances within 164,000 images, and the number of classes differ.

The dataset addresses three core research problems in scene understanding: detecting non-iconic views (or non-canonical perspectives) of objects, contextual reasoning between objects and the precise 2D localization of objects he selection of object categories is a non-trivial exercise. The categories must form a representative set of all categories, be relevant to practical applications and occur with high enough frequency to enable the collection of a large dataset.

Other important decisions are whether to include both “thing” and “stuff” categories and whether fine-grained and object-part categories should be included. “Thing” categories include objects for which individual instances may be easily labeled (person, chair, car) whereas “stuff” categories include materials and objects with no clear boundaries (sky, street, grass). Authors decided to only include “thing” categories and not “stuff” because they are primarily interested in the precise localization of object instances. Check out the COCO-Stuff 164k for details

The specificity of object categories can vary significantly. For instance, a dog could be a member of the “mammal”, “dog”, or “German shepherd” categories. To enable the practical collection of a significant number of instances per category, authors chose to limit our dataset to entry-level categories, i.e. category labels that are commonly used by humans when describing objects (dog, chair, person). It is also possible that some object categories may be parts of other object categories. For instance, a face may be part of a person. Authors anticipate the inclusion of object-part categories (face, hands, wheels) would be beneficial for many real-world applications.

Summary #

COCO 2017: Common Objects in Context 2017 is a dataset for instance segmentation, semantic segmentation, and object detection tasks. It is applicable or relevant across various domains.

The dataset consists of 163957 images with 2099063 labeled objects belonging to 80 different classes including person, chair, car, and other: dining table, cup, bottle, bowl, handbag, truck, bench, backpack, book, cell phone, sink, clock, tv, potted plant, couch, dog, knife, sports ball, traffic light, cat, umbrella, bus, tie, bed, train, and 52 more.

Images in the COCO 2017 dataset have pixel-level instance segmentation and bounding box annotations. Due to the nature of the instance segmentation task, it can be automatically transformed into a semantic segmentation task (only one mask for every class). There are 41739 (25% of the total) unlabeled images (i.e. without annotations). There are 3 splits in the dataset: train2017 (118287 images), test2017 (40670 images), and val2017 (5000 images). The dataset was released in 2017 by the COCO Consortium.

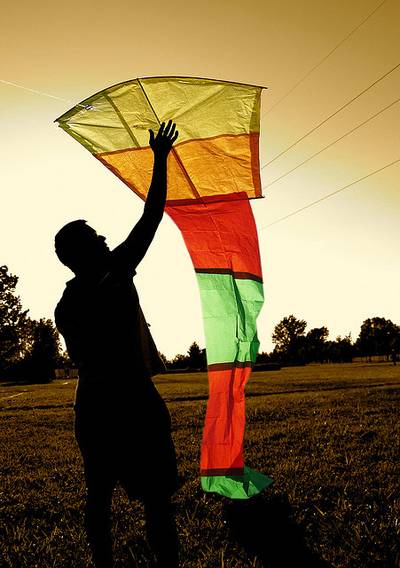

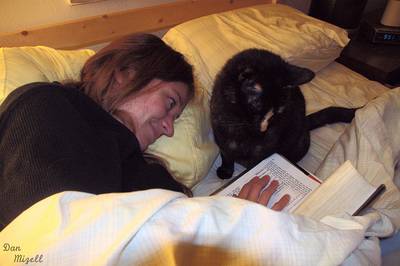

Here is a visualized example for randomly selected sample classes:

Explore #

COCO 2017 dataset has 163957 images. Click on one of the examples below or open "Explore" tool anytime you need to view dataset images with annotations. This tool has extended visualization capabilities like zoom, translation, objects table, custom filters and more. Hover the mouse over the images to hide or show annotations.

Class balance #

There are 80 annotation classes in the dataset. Find the general statistics and balances for every class in the table below. Click any row to preview images that have labels of the selected class. Sort by column to find the most rare or prevalent classes.

Class ㅤ | Images ㅤ | Objects ㅤ | Count on image average | Area on image average |

|---|---|---|---|---|

person➔ any | 66808 | 649193 | 9.72 | 29.33% |

chair➔ any | 13354 | 98848 | 7.4 | 11.35% |

car➔ any | 12786 | 110156 | 8.62 | 8.71% |

dining table➔ any | 12338 | 44412 | 3.6 | 43.66% |

cup➔ any | 9579 | 44616 | 4.66 | 4.98% |

bottle➔ any | 8880 | 55003 | 6.19 | 4.23% |

bowl➔ any | 7425 | 31719 | 4.27 | 15.96% |

handbag➔ any | 7133 | 28871 | 4.05 | 3.54% |

truck➔ any | 6377 | 23846 | 3.74 | 16.8% |

bench➔ any | 5805 | 28267 | 4.87 | 12.96% |

Images #

Explore every single image in the dataset with respect to the number of annotations of each class it has. Click a row to preview selected image. Sort by any column to find anomalies and edge cases. Use horizontal scroll if the table has many columns for a large number of classes in the dataset.

Class sizes #

The table below gives various size properties of objects for every class. Click a row to see the image with annotations of the selected class. Sort columns to find classes with the smallest or largest objects or understand the size differences between classes.

Class | Object count | Avg area | Max area | Min area | Min height | Min height | Max height | Max height | Avg height | Avg height | Min width | Min width | Max width | Max width |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

person any | 649193 | 5.07% | 100% | 0% | 1px | 0.21% | 640px | 100% | 117px | 24.68% | 1px | 0.16% | 640px | 100% |

car any | 110156 | 1.78% | 100% | 0% | 2px | 0.31% | 640px | 100% | 44px | 9.42% | 2px | 0.31% | 640px | 100% |

chair any | 98848 | 2.46% | 100% | 0% | 2px | 0.31% | 640px | 100% | 75px | 16.03% | 1px | 0.16% | 640px | 100% |

book any | 56131 | 1.53% | 100% | 0% | 1px | 0.21% | 633px | 100% | 51px | 10.91% | 2px | 0.31% | 640px | 100% |

bottle any | 55003 | 1.17% | 99.38% | 0% | 2px | 0.31% | 640px | 100% | 67px | 13.85% | 2px | 0.31% | 640px | 100% |

cup any | 44616 | 1.87% | 100% | 0% | 2px | 0.31% | 633px | 100% | 65px | 13.45% | 2px | 0.31% | 640px | 100% |

dining table any | 44412 | 21.17% | 100% | 0% | 1px | 0.24% | 640px | 100% | 169px | 34.81% | 2px | 0.31% | 640px | 100% |

bowl any | 31719 | 6.67% | 100% | 0% | 2px | 0.42% | 640px | 100% | 88px | 18.22% | 2px | 0.42% | 640px | 100% |

skis any | 29926 | 0.63% | 74.11% | 0% | 1px | 0.16% | 629px | 100% | 34px | 7.03% | 1px | 0.16% | 634px | 99.79% |

handbag any | 28871 | 1.36% | 99.38% | 0% | 2px | 0.42% | 582px | 100% | 60px | 12.61% | 1px | 0.2% | 640px | 100% |

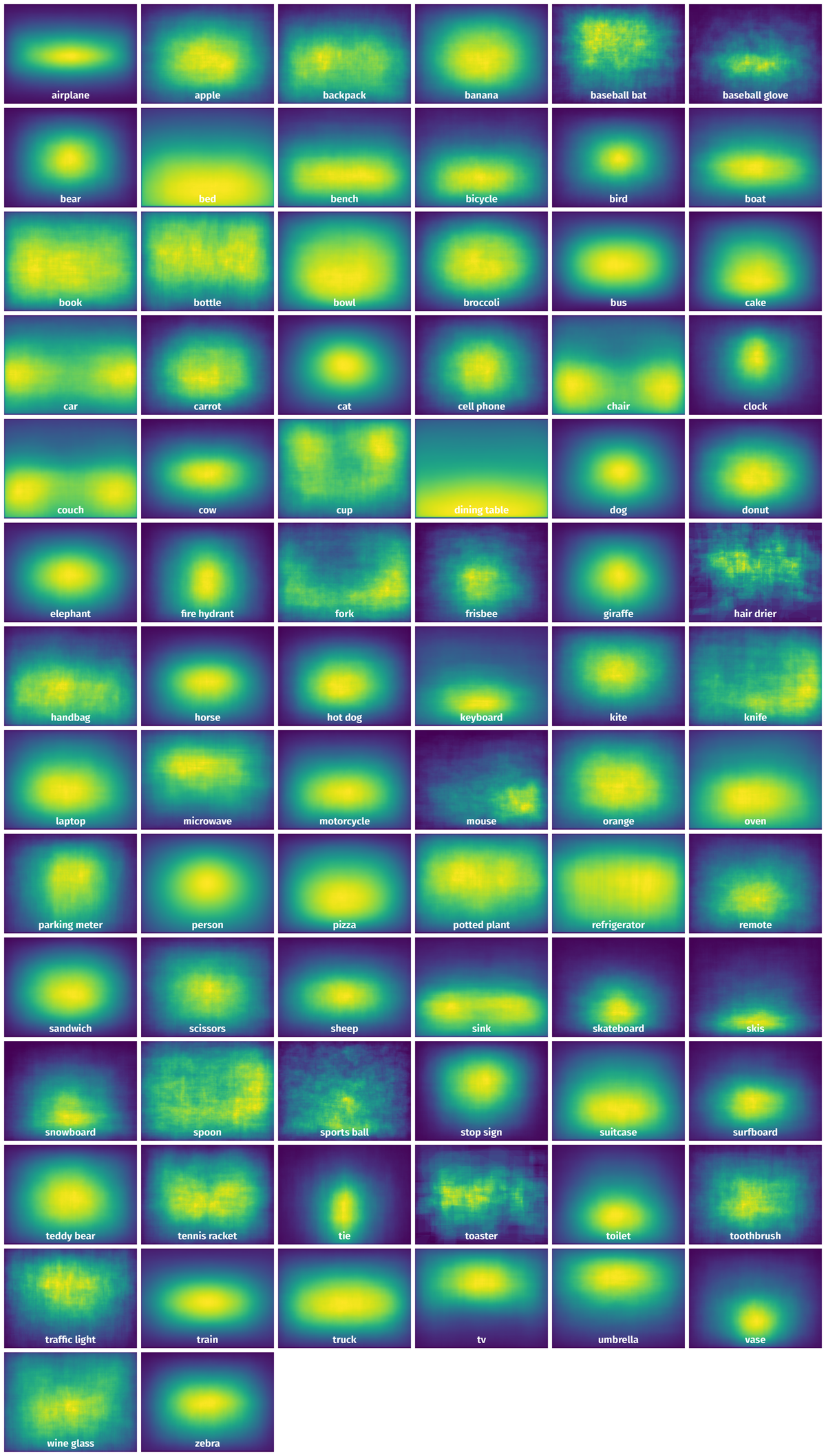

Spatial Heatmap #

The heatmaps below give the spatial distributions of all objects for every class. These visualizations provide insights into the most probable and rare object locations on the image. It helps analyze objects' placements in a dataset.

Objects #

Table contains all 102042 objects. Click a row to preview an image with annotations, and use search or pagination to navigate. Sort columns to find outliers in the dataset.

Object ID ㅤ | Class ㅤ | Image name click row to open | Image size height x width | Height ㅤ | Height ㅤ | Width ㅤ | Width ㅤ | Area ㅤ |

|---|---|---|---|---|---|---|---|---|

1➔ | tv any | 000000301421.jpg | 427 x 640 | 128px | 29.98% | 152px | 23.75% | 5.91% |

2➔ | tv any | 000000301421.jpg | 427 x 640 | 128px | 29.98% | 152px | 23.75% | 7.12% |

3➔ | chair any | 000000301421.jpg | 427 x 640 | 135px | 31.62% | 178px | 27.81% | 4.83% |

4➔ | chair any | 000000301421.jpg | 427 x 640 | 135px | 31.62% | 178px | 27.81% | 8.79% |

5➔ | cell phone any | 000000301421.jpg | 427 x 640 | 59px | 13.82% | 35px | 5.47% | 0.47% |

6➔ | cell phone any | 000000301421.jpg | 427 x 640 | 59px | 13.82% | 35px | 5.47% | 0.76% |

7➔ | laptop any | 000000301421.jpg | 427 x 640 | 136px | 31.85% | 255px | 39.84% | 6.07% |

8➔ | laptop any | 000000301421.jpg | 427 x 640 | 136px | 31.85% | 255px | 39.84% | 12.69% |

9➔ | keyboard any | 000000301421.jpg | 427 x 640 | 65px | 15.22% | 121px | 18.91% | 1.34% |

10➔ | keyboard any | 000000301421.jpg | 427 x 640 | 65px | 15.22% | 121px | 18.91% | 2.88% |

License #

Citation #

If you make use of the COCO 2017 data, please cite the following reference:

@dataset{COCO 2017,

author={Tsung-Yi and Genevieve Patterson and Matteo R. Ronchi and Yin Cui and Michael Maire and Serge Belongie and Lubomir Bourdev and Ross Girshick and James Hays Georgia and Pietro Perona and Deva Ramanan and Larry Zitnick and Piotr Dollár},

title={COCO 2017: Common Objects in Context 2017},

year={2017},

url={https://cocodataset.org/#home}

}

If you are happy with Dataset Ninja and use provided visualizations and tools in your work, please cite us:

@misc{ visualization-tools-for-coco-dataset,

title = { Visualization Tools for COCO 2017 Dataset },

type = { Computer Vision Tools },

author = { Dataset Ninja },

howpublished = { \url{ https://datasetninja.com/coco-2017 } },

url = { https://datasetninja.com/coco-2017 },

journal = { Dataset Ninja },

publisher = { Dataset Ninja },

year = { 2026 },

month = { jun },

note = { visited on 2026-06-04 },

}Download #

Dataset COCO 2017 can be downloaded in Supervisely format:

As an alternative, it can be downloaded with dataset-tools package:

pip install --upgrade dataset-tools

… using following python code:

import dataset_tools as dtools

dtools.download(dataset='COCO 2017', dst_dir='~/dataset-ninja/')

Make sure not to overlook the python code example available on the Supervisely Developer Portal. It will give you a clear idea of how to effortlessly work with the downloaded dataset.

The data in original format can be downloaded here:

Disclaimer #

Our gal from the legal dep told us we need to post this:

Dataset Ninja provides visualizations and statistics for some datasets that can be found online and can be downloaded by general audience. Dataset Ninja is not a dataset hosting platform and can only be used for informational purposes. The platform does not claim any rights for the original content, including images, videos, annotations and descriptions. Joint publishing is prohibited.

You take full responsibility when you use datasets presented at Dataset Ninja, as well as other information, including visualizations and statistics we provide. You are in charge of compliance with any dataset license and all other permissions. You are required to navigate datasets homepage and make sure that you can use it. In case of any questions, get in touch with us at hello@datasetninja.com.